AIGC Detection

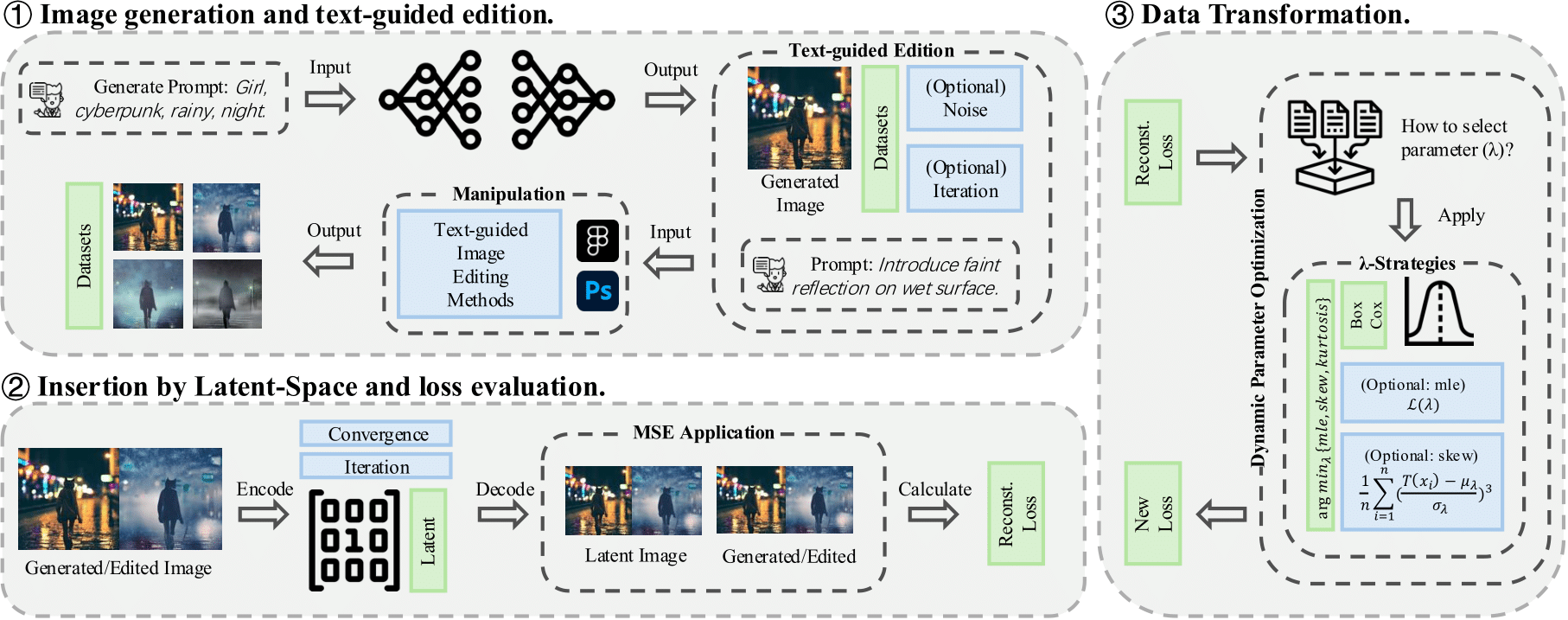

This research project introduces LAMBDATRACER, a method for detecting and tracing AI-generated and manipulated images without modifying generative models. Using an adaptive loss transformation, it distinguishes original content from edited versions and outperforms baseline methods, supporting content authenticity and intellectual property protection.

With the rapid advancement of diffusion models, AI-generated content (AIGC) has become increasingly accessible and editable through text-guided image editing tools like InstructPix2Pix and ControlNet. While these technologies enhance creative applications, they also introduce significant risks, such as forgery, unauthorized modifications, and intellectual property disputes. Existing attribution methods struggle to trace iterative edits, particularly in adversarial settings where multiple modifications can obscure an image’s origin. To address this challenge, we propose LAMBDATRACER, a robust latent-space attribution method that identifies and differentiates authentic AI-generated images from manipulated ones without requiring modifications to generative or editing pipelines. By adaptively calibrating reconstruction losses, LAMBDATRACER remains effective across various editing processes, ensuring reliable provenance tracing in dynamic and open AI ecosystems.
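To make the idea of calibrating reconstruction losses more concrete, here is a minimal sketch. It is not the authors' implementation. The text above does not specify the transformation, so a Box-Cox-style power transform with parameter `lam` is assumed purely for illustration. The decision rule (lower loss means "original"), the synthetic log-normal losses, and every function name are likewise hypothetical.

```python
import math
import random
from statistics import fmean, pvariance


def power_transform(loss, lam):
    """Box-Cox-style transform of a strictly positive loss (assumed form)."""
    if abs(lam) < 1e-12:
        return math.log(loss)
    return (loss ** lam - 1.0) / lam


def separation(a, b):
    """Fisher ratio: squared gap between means over the summed variances."""
    return (fmean(a) - fmean(b)) ** 2 / (pvariance(a) + pvariance(b))


def pick_lambda(orig_losses, edited_losses, grid):
    """Grid-search the lam that best separates the two loss populations."""
    def score(lam):
        a = [power_transform(x, lam) for x in orig_losses]
        b = [power_transform(x, lam) for x in edited_losses]
        return separation(a, b)
    return max(grid, key=score)


def fit_threshold(orig_losses, edited_losses, lam):
    """Midpoint between the transformed class means (illustrative rule)."""
    a = fmean(power_transform(x, lam) for x in orig_losses)
    b = fmean(power_transform(x, lam) for x in edited_losses)
    return 0.5 * (a + b)


def is_original(loss, lam, threshold):
    """Assumption: unedited generations reconstruct with lower loss."""
    return power_transform(loss, lam) < threshold


def synthetic_losses(seed=0, n=500):
    """Hypothetical skewed losses standing in for real reconstruction errors."""
    rng = random.Random(seed)
    orig = [rng.lognormvariate(-3.0, 0.5) for _ in range(n)]
    edited = [rng.lognormvariate(-1.0, 0.5) for _ in range(n)]
    return orig, edited


if __name__ == "__main__":
    orig, edited = synthetic_losses()
    grid = [i / 10 for i in range(-10, 11)]
    lam = pick_lambda(orig, edited, grid)
    thr = fit_threshold(orig, edited, lam)
    print("chosen lam:", lam, "threshold:", thr)
```

In this toy setup the raw losses are heavily skewed, so a fixed threshold on them separates the two groups poorly. Choosing `lam` from the data reshapes the loss distribution before thresholding, which is the intuition behind calibrating losses adaptively rather than using them directly.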

To cite our work, please use the following BibTeX entry:

@misc{you2025losteditslambdacompassaigc,
  title={Lost in Edits? A $\lambda$-Compass for AIGC Provenance},
  author={Wenhao You and Bryan Hooi and Yiwei Wang and Euijin Choo and Ming-Hsuan Yang and Junsong Yuan and Zi Huang and Yujun Cai},
  year={2025},
  eprint={2502.04364},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2502.04364},
}